A large Google Health artificial intelligence (AI) trial produced an absolute 5.7% reduction in breast cancer false positives in the US and a 1.2% reduction in the UK, as well as a 9.4% reduction in false negatives in the US and a 2.7% reduction in the UK.

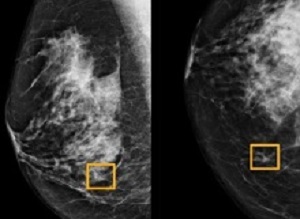

In November, Google Health detailed its mission to “help everybody live their healthiest life.” The division has now published “initial findings” on using artificial intelligence (AI) to improve breast cancer screening. Google notes how “spotting and diagnosing breast cancer early remains a challenge.” Detection today is performed through digital mammography, but reading breast x-ray images is a “difficult task, even for experts, and can often result in both false positives and false negatives.”

In turn, these inaccuracies can lead to delays in detection and treatment, unnecessary stress for patients, and a higher workload for radiologists who are already in short supply.

The company’s solution involves applying artificial intelligence. Findings from research conducted over the past two years have been published and “show that our AI model spotted breast cancer in de-identified screening mammograms (where identifiable information has been removed) with greater accuracy, fewer false positives, and fewer false negatives than experts.”

It follows work in 2017 on detecting metastatic breast cancer from lymph node specimens, and on deep learning algorithms that help doctors spot breast cancer.

Google Health collaborated with fellow Alphabet company DeepMind, Cancer Research UK Imperial Centre, Northwestern University, and Royal Surrey County Hospital to “see if artificial intelligence could support radiologists to spot the signs of breast cancer more accurately.”

“In this evaluation, our system produced a 5.7% reduction of false positives in the US, and a 1.2% reduction in the UK. It produced a 9.4% reduction in false negatives in the US, and a 2.7% reduction in the UK.”
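The abstract reproduced at the end of this article describes these figures as absolute reductions, which means percentage-point differences in the error rate and not a proportional cut. The sketch below illustrates the distinction. The counts are hypothetical and chosen only to make the arithmetic easy to follow; they are not data from the study, and the function names are illustrative.

```python
def error_rate(errors: int, cases: int) -> float:
    """Fraction of cases that were misclassified."""
    if cases <= 0:
        raise ValueError("cases must be positive")
    return errors / cases


def reductions(baseline_rate: float, new_rate: float) -> tuple[float, float]:
    """Return (absolute, relative) reduction from baseline_rate to new_rate."""
    absolute = baseline_rate - new_rate   # difference in percentage points
    relative = absolute / baseline_rate   # share of the baseline errors removed
    return absolute, relative


# Hypothetical counts (NOT from the study): 1,000 women without cancer,
# 120 recalled in error by human readers, 63 recalled in error by a model.
reader_fpr = error_rate(120, 1000)
model_fpr = error_rate(63, 1000)

absolute, relative = reductions(reader_fpr, model_fpr)
print(f"absolute reduction: {absolute:.1%}")
print(f"relative reduction: {relative:.1%}")
```

With these made-up counts, the same change is an absolute reduction of about 5.7 percentage points but a relative reduction of almost half, which is why it matters which of the two a headline figure refers to.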

What’s notable is that the AI system didn’t have access to patient histories and previous mammograms, which doctors would normally use. The model was trained on de-identified mammograms of 76,000 women in the UK and 15,000 women in the US.

“In an independent study of six radiologists, the AI system outperformed all of the human readers: the area under the receiver operating characteristic curve (AUC-ROC) for the AI system was greater than the AUC-ROC for the average radiologist by an absolute margin of 11.5%.”
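For readers unfamiliar with the metric, AUC-ROC can be read as the probability that a randomly chosen cancer case receives a higher suspicion score than a randomly chosen normal case. A value of 0.5 is chance and 1.0 is perfect ranking, so an absolute margin of 11.5% corresponds to a gap of 0.115 on that scale. The following is a minimal sketch of that pairwise definition, not the study's evaluation code; the scores are hypothetical.

```python
def auc_roc(positive_scores: list[float], negative_scores: list[float]) -> float:
    """AUC-ROC as the fraction of (positive, negative) pairs ranked correctly.

    Ties count as half a correct pair. This is the pairwise
    (Mann-Whitney) formulation of the area under the ROC curve.
    """
    if not positive_scores or not negative_scores:
        raise ValueError("need at least one positive and one negative case")
    correct = 0.0
    for p in positive_scores:
        for n in negative_scores:
            if p > n:
                correct += 1.0
            elif p == n:
                correct += 0.5
    return correct / (len(positive_scores) * len(negative_scores))


# Hypothetical suspicion scores (NOT from the study).
cancer_scores = [0.9, 0.8, 0.6, 0.4]
normal_scores = [0.7, 0.5, 0.3, 0.2, 0.1]

print(auc_roc(cancer_scores, normal_scores))  # 17 of 20 pairs ranked correctly
```

The quadratic loop is deliberate for clarity. On large datasets a rank-based implementation gives the same value more efficiently.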

What’s next is more research, as well as “prospective clinical studies and regulatory approval,” to establish how AI could aid in breast cancer detection. In the “coming years,” Google hopes to translate “machine learning research into tools that benefit clinicians and patients.”

The findings of the study represent a major advance in the potential for the early detection of breast cancer, Mozziyar Etemadi, one of its co-authors from Northwestern Medicine in Chicago, said.

Reuters Health quotes Connie Lehman, chief of the breast imaging department at Harvard’s Massachusetts General Hospital, as saying that the results are in line with findings from several groups using AI to improve cancer detection in mammograms, including her own work.

The report says the notion of using computers to improve cancer diagnostics is decades old, and computer-aided detection (CAD) systems are commonplace in mammography clinics, yet CAD programs have not improved performance in clinical practice. The issue, Lehman said, is that current CAD programs were trained to identify things human radiologists can see, whereas with AI, computers learn to spot cancers based on the actual results of thousands of mammograms.

This has the potential to “exceed human capacity to identify subtle cues that the human eye and brain aren’t able to perceive,” Lehman added.

Although computers have not been “super helpful” so far, “what we’ve shown at least in tens of thousands of mammograms is the tool can actually make a very well-informed decision,” Etemadi said.

Chris Kelly, a clinician scientist at Google Health, said in a report in The Guardian that the next major step would be a trial to assess the AI in real-world conditions. Its performance could slip when it is fed images from different mammogram systems.

“Like the rest of the health service, breast imaging, and UK radiology more widely, is understaffed and desperate for help,” said Dr Caroline Rubin, vice-president for clinical radiology at the Royal College of Radiologists. “AI programs will not solve the human staffing crisis, as radiologists and imaging teams do far more than just look at scans, but they will undoubtedly help by acting as a second pair of eyes and a safety net.”

“It is a competitive market for developers and these programs will need to be rigorously tested and regulated first. The next step for promising products is for them to be used in clinical trials, evaluated in practice and used on patients screened in real-time, a process that will need to be overseen by the UK public health agencies that have overall responsibility for the breast screening programmes.”

Michelle Mitchell, Cancer Research UK’s CEO, is quoted in the report as saying: “Screening helps diagnose breast cancer at an early stage, when treatment is more likely to be successful, ensuring more people survive the disease. But it also has harms such as diagnosing cancers that would never have gone on to cause any problems and missing some cancers. This is still early stage research, but it shows how AI could improve breast cancer screening and ease pressure off the NHS.”

Abstract

Screening mammography aims to identify breast cancer at earlier stages of the disease, when treatment can be more successful [1]. Despite the existence of screening programmes worldwide, the interpretation of mammograms is affected by high rates of false positives and false negatives [2]. Here we present an artificial intelligence (AI) system that is capable of surpassing human experts in breast cancer prediction. To assess its performance in the clinical setting, we curated a large representative dataset from the UK and a large enriched dataset from the USA. We show an absolute reduction of 5.7% and 1.2% (USA and UK) in false positives and 9.4% and 2.7% in false negatives. We provide evidence of the ability of the system to generalize from the UK to the USA. In an independent study of six radiologists, the AI system outperformed all of the human readers: the area under the receiver operating characteristic curve (AUC-ROC) for the AI system was greater than the AUC-ROC for the average radiologist by an absolute margin of 11.5%. We ran a simulation in which the AI system participated in the double-reading process that is used in the UK, and found that the AI system maintained non-inferior performance and reduced the workload of the second reader by 88%. This robust assessment of the AI system paves the way for clinical trials to improve the accuracy and efficiency of breast cancer screening.
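The abstract does not spell out how the double-reading simulation worked. One plausible reading, assumed here purely for illustration, is that the AI's opinion stands in for the second reader: when it agrees with the first reader, that decision is final, and a human second reader is consulted only when the two disagree. The sketch below follows that assumption, with hypothetical recall decisions and an illustrative function name; it is not the study's protocol.

```python
def simulate_double_reading(
    first_reader: list[bool],
    ai_system: list[bool],
    second_reader: list[bool],
) -> tuple[list[bool], float]:
    """Return (final recall decisions, fraction of second reads avoided).

    Assumption: the human second reader is only consulted when the AI
    disagrees with the first reader, and then acts as the tie-breaker.
    """
    if not (len(first_reader) == len(ai_system) == len(second_reader)):
        raise ValueError("all readers must score the same cases")
    if not first_reader:
        raise ValueError("need at least one case")

    final: list[bool] = []
    second_reads = 0
    for first, ai, second in zip(first_reader, ai_system, second_reader):
        if first == ai:
            final.append(first)   # agreement: no human second read needed
        else:
            second_reads += 1     # disagreement: fall back to the human
            final.append(second)
    avoided = 1 - second_reads / len(first_reader)
    return final, avoided


# Hypothetical recall decisions for eight cases (True = recall the patient).
first = [False, False, True, False, True, False, False, True]
ai = [False, False, True, True, True, False, False, False]
second = [False, False, True, False, True, False, False, True]

decisions, avoided = simulate_double_reading(first, ai, second)
print(f"second reads avoided: {avoided:.0%}")
```

Under this assumption the workload saving is simply the rate at which the AI agrees with the first reader, so the figure depends heavily on how often the two concur.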

Authors

Scott Mayer McKinney, Marcin Sieniek, Varun Godbole, Jonathan Godwin, Natasha Antropova, Hutan Ashrafian, Trevor Back, Mary Chesus, Greg C Corrado, Ara Darzi, Mozziyar Etemadi, Florencia Garcia-Vicente, Fiona J Gilbert, Mark Halling-Brown, Demis Hassabis, Sunny Jansen, Alan Karthikesalingam, Christopher J Kelly, Dominic King, Joseph R Ledsam, David Melnick, Hormuz Mostofi, Lily Peng, Joshua Jay Reicher, Bernardino Romera-Paredes, Richard Sidebottom, Mustafa Suleyman, Daniel Tse, Kenneth C. Young, Jeffrey De Fauw, Shravya Shetty

[link url="https://9to5google.com/2020/01/01/google-ai-breast-cancer/"]9to5 Google report[/link]

[link url="https://www.nature.com/articles/s41586-019-1799-6"]Nature abstract[/link]

[link url="https://uk.reuters.com/article/us-health-mammograms-ai/study-finds-google-system-could-improve-breast-cancer-detection-idUKKBN1Z0206"]Reuters Health report[/link]

[link url="https://www.theguardian.com/society/2020/jan/01/ai-system-outperforms-experts-in-spotting-breast-cancer"]The Guardian report[/link]