A team of psychologists at the University of California has now developed a free test to measure reasoning ability in undergraduates that takes roughly 10 minutes to complete and is 'highly comparable' to the widely used Raven's Advanced Progressive Matrices (APM) test.

Raven's Advanced Progressive Matrices, or APM, is a widely used standardised test to measure reasoning ability, often administered to undergraduate students. One drawback, however, is that the test, which has been in use for about 80 years, takes 40 to 60 minutes to complete. Another is that the test kit and answer sheets can cost hundreds of dollars, a cost that rises with the number of people taking the test.

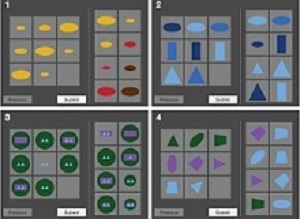

A team of psychologists at University of California – Riverside (UCR) and University of California – Irvine (UCI) has now developed a highly comparable free test that takes roughly 10 minutes to complete. Called the University of California Matrix Reasoning Task, or UCMRT, the user-friendly test measures abstract problem-solving ability and works on tablets and other mobile devices.

The researchers report that UCMRT, which they tested on 713 undergraduate students at UC Riverside and UC Irvine, is a reliable and valid measure of nonverbal problem solving that predicts academic proficiency and measures "fluid intelligence" – intelligence that is not dependent on pre-existing knowledge and is linked to reasoning and problem solving. Like APM, the test differentiates among people at the high end of intellectual ability. Unlike APM, UCMRT offers three alternate versions, allowing the test to be used three times by the same user.

"Performance on UCMRT correlated with a math test, college GPA, as well as college admissions test scores," said Anja Pahor, a postdoctoral researcher who designed UCMRT's problems and works at both UC campuses. "Perhaps the greatest advantage of UCMRT is its short administration time. Further, it is self-administrable, allowing for remote testing. Log files instantly provide the number of problems solved correctly, incorrectly, or skipped, which is easily understandable for researchers, clinicians, and users. Unlike standard paper and pencil tests, UCMRT provides insight into problem-solving patterns and reaction times."

Like many psychologists who use Raven's APM, Pahor and her co-authors – UCR's Aaron R Seitz and Trevor Stavropoulos, and UCI's Susanne M Jaeggi – realised that APM is not well suited to their studies given its cost, the time it takes to complete, and its lack of alternative, cross-validated forms that confront participants with novel questions each time they take the test. So the psychologists opted to create their own version of the test.

"UCMRT predicts standardised test scores better than Raven's APM," said Seitz, a professor of psychology, director of the Brain Game Centre at UCR, and Pahor's mentor. "Intelligence tests are big-money operations. Companies that create the tests often levy a hefty charge for their use, an impediment to doing research. Our test, available for free, levels the playing field for a vast number of researchers interested in using it. We are already working to make UCMRT better than it already is. Technology has changed over the decades that APM has been around, and people's expectations have changed accordingly. It's important to have tests that reflect these changes and respond in a timely manner to them."

Pahor said UCMRT has only 23 problems for users to solve (APM, in contrast, has 36), yet delivers measurements that are as good as those from APM. "Of the 713 students who took UCMRT, about 230 students took both tests," she said. "UCMRT correlates with APM about as well as APM correlates with itself."
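The comparison Pahor describes rests on correlating scores from the two tests across the students who took both. A minimal sketch of that calculation follows, assuming a Pearson correlation (the article does not say which statistic the researchers used). The function name `pearson_r` and the score lists are hypothetical, invented purely for illustration, and are not data from the study.

```python
from math import sqrt


def pearson_r(x, y):
    """Return the Pearson correlation between two equal-length score lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least 2 scores")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of products of deviations from each mean (unnormalised covariance)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("scores must vary for a correlation to be defined")
    return cov / sqrt(var_x * var_y)


# Hypothetical scores for five students (illustration only, not study data)
ucmrt_scores = [12, 15, 9, 20, 17]   # out of 23 problems
apm_scores = [18, 22, 14, 30, 27]    # out of 36 problems

r = pearson_r(ucmrt_scores, apm_scores)
print(f"correlation between the two tests: {r:.2f}")
```

A value near 1 would indicate that students who score well on one test tend to score well on the other. Comparing that figure with the correlation between two sittings of the same test is one way to read the claim that UCMRT tracks APM "about as well as APM correlates with itself".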

Jaeggi, who also mentors Pahor and is an associate professor in the School of Education at UCI where she leads the Working Memory and Plasticity Laboratory, adds, "The way we set up and designed UCMRT allows the inclusion of variants that can be used for populations across the lifespan from young children to older adults. The problems we designed are visually appealing, making it easy to motivate participants to complete the task. Furthermore, there are minimal verbal instructions and participants can figure out what to do by completing the practice problems."

Although UCMRT instructions are currently in English, the test can be easily localised to include relevant translations, Seitz said.

"We are motivated by helping the scientific community and want to create versions of UCMRT for different age groups and abilities," he added. "This test could help with early intervention programmes. We are already working on a project with California State University at San Bernardino to move forward with that."

UCMRT is largely based on matrix problems generated by Sandia National Laboratories; most of the test's matrices are inspired by those the lab produced. Stavropoulos, at the UCR Brain Game Centre, programmed UCMRT's problems.

Abstract

Many cognitive tasks have been adapted for tablet-based testing, but tests to assess nonverbal reasoning ability, as measured by matrix-type problems that are suited to repeated testing, have yet to be adapted for and validated on mobile platforms. Drawing on previous research, we developed the University of California Matrix Reasoning Task (UCMRT)—a short, user-friendly measure of abstract problem solving with three alternate forms that works on tablets and other mobile devices and that is targeted at a high-ability population frequently used in the literature (i.e., college students). To test the psychometric properties of UCMRT, a large sample of healthy young adults completed parallel forms of the test, and a subsample also completed Raven’s Advanced Progressive Matrices and a math test; furthermore, we collected college records of academic ability and achievement. These data show that UCMRT is reliable and has adequate convergent and external validity. UCMRT is self-administrable, freely available for researchers, facilitates repeated testing of fluid intelligence, and resolves numerous limitations of existing matrix tests.

Authors

Anja Pahor, Trevor Stavropoulos, Susanne M Jaeggi, Aaron R Seitz

[link url="https://www.sciencedaily.com/releases/2018/10/181029131008.htm"]University of California – Riverside material[/link]

[link url="https://link.springer.com/article/10.3758%2Fs13428-018-1152-2"]Behaviour Research Methods abstract[/link]